LVMH

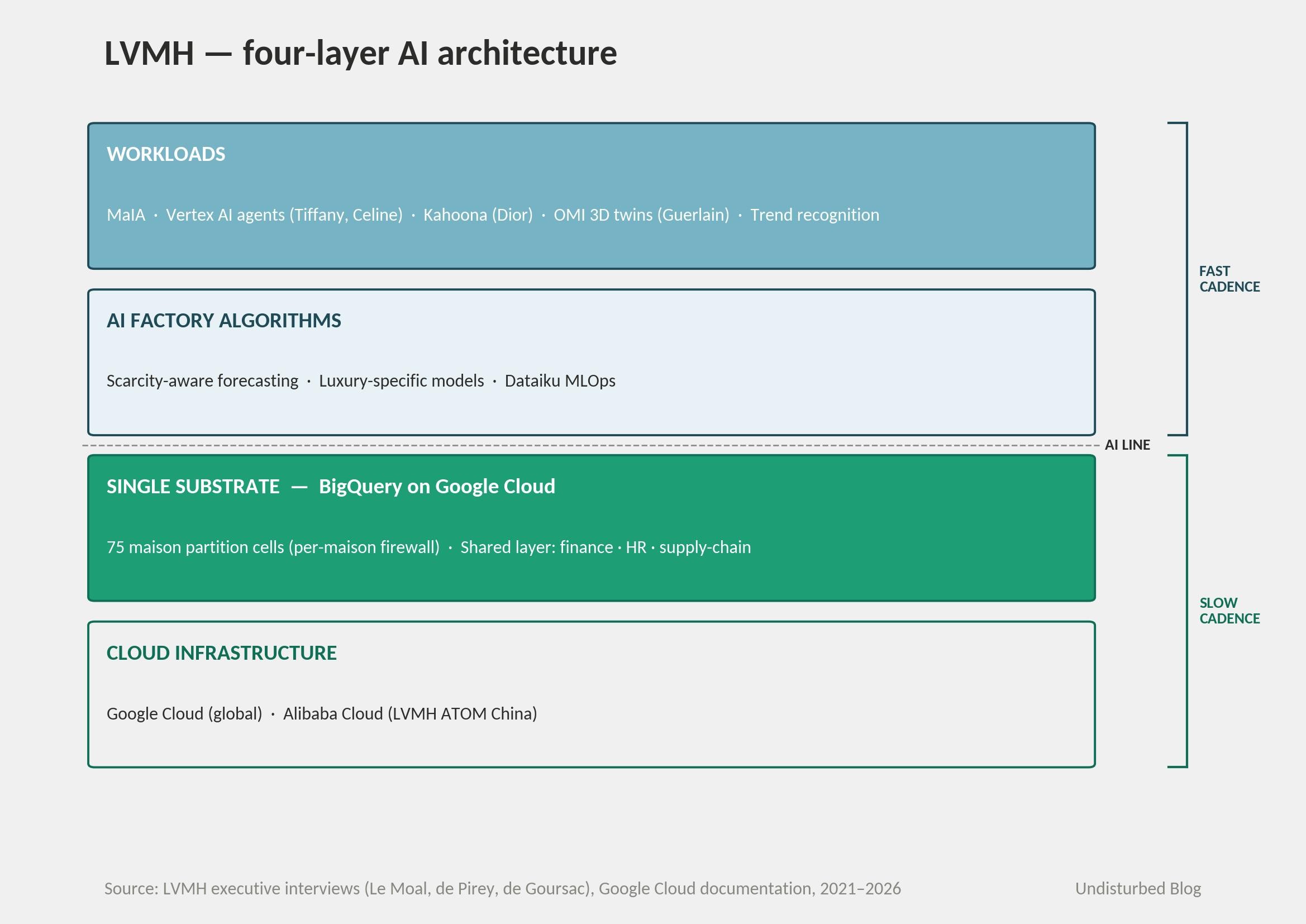

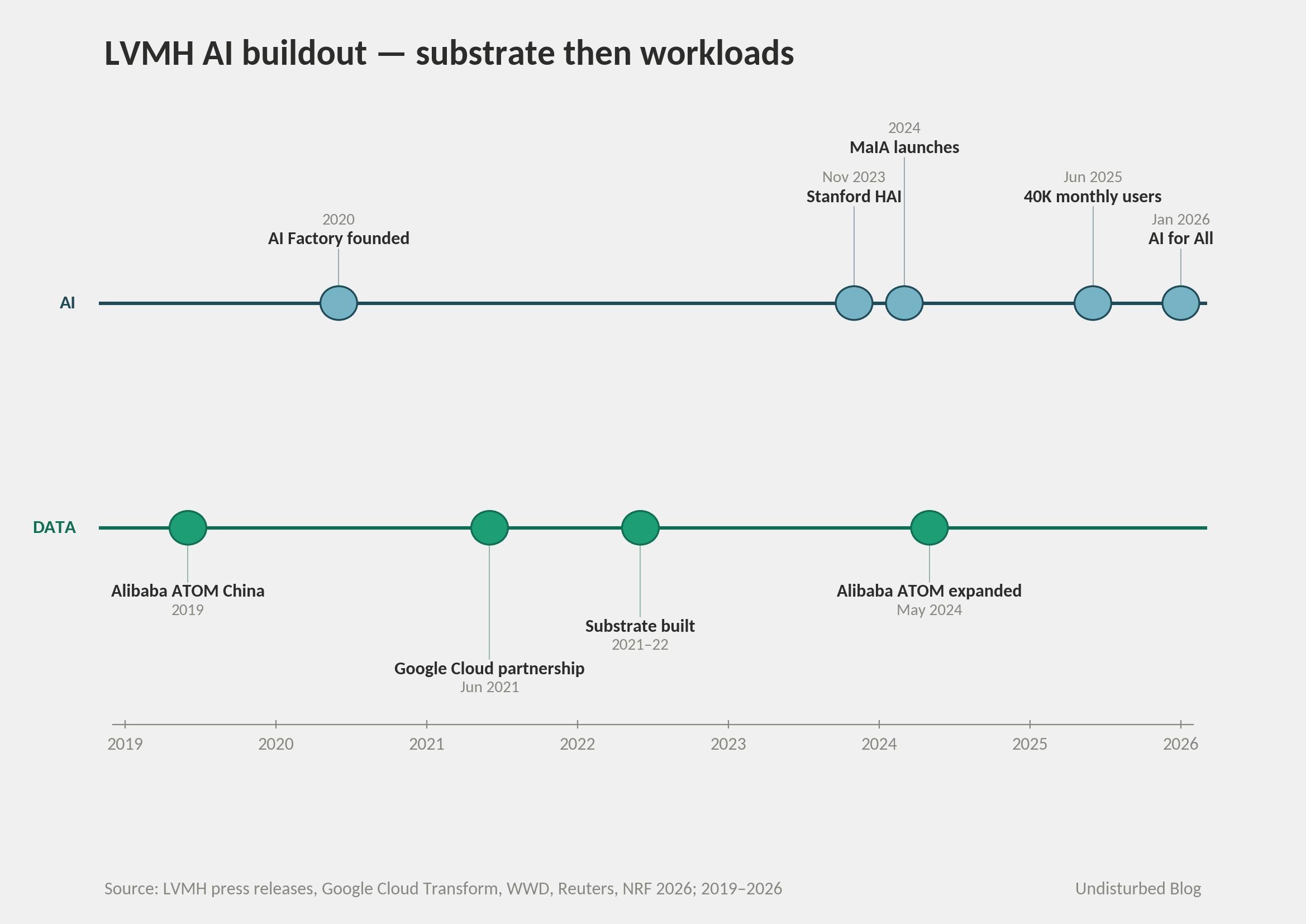

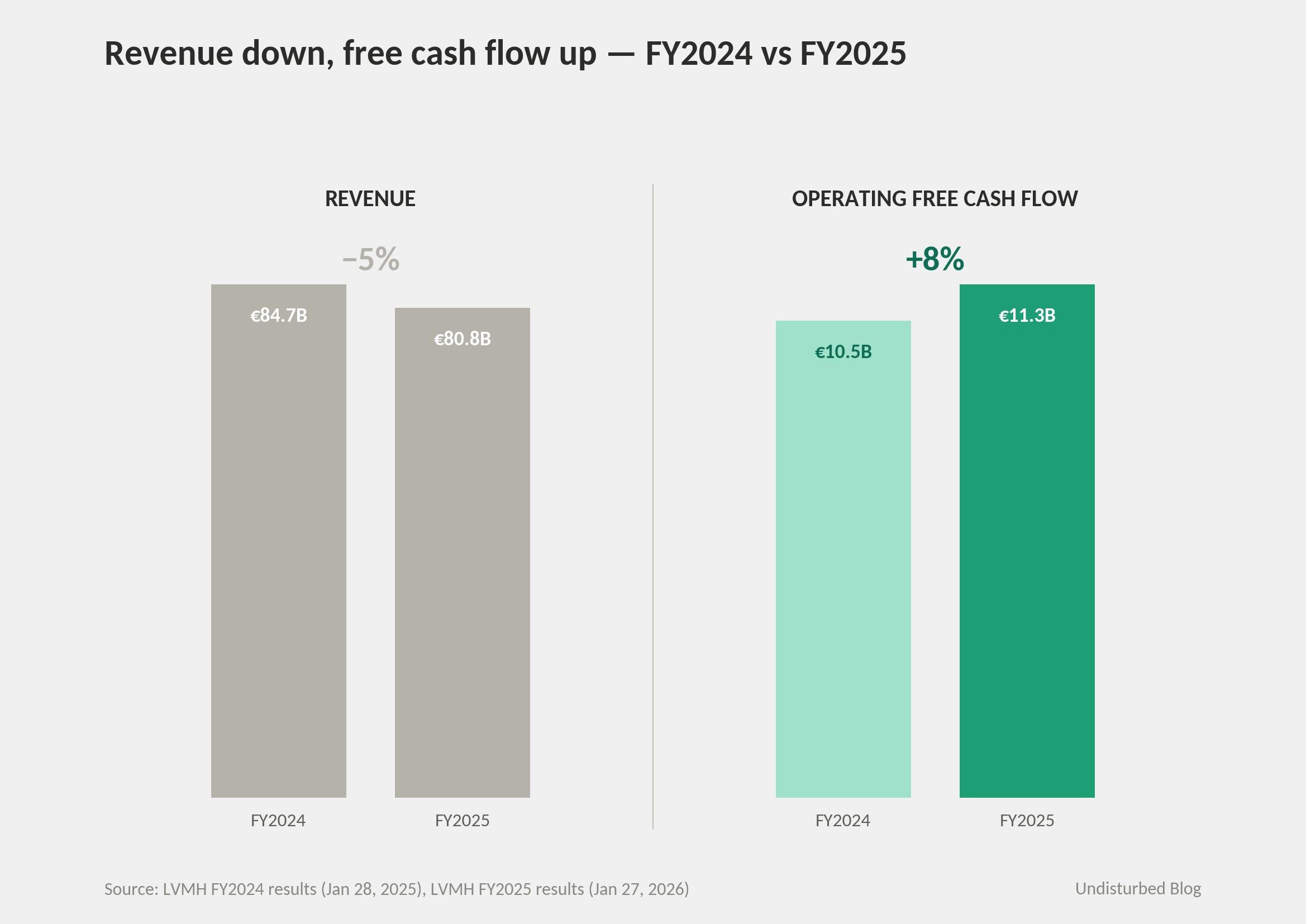

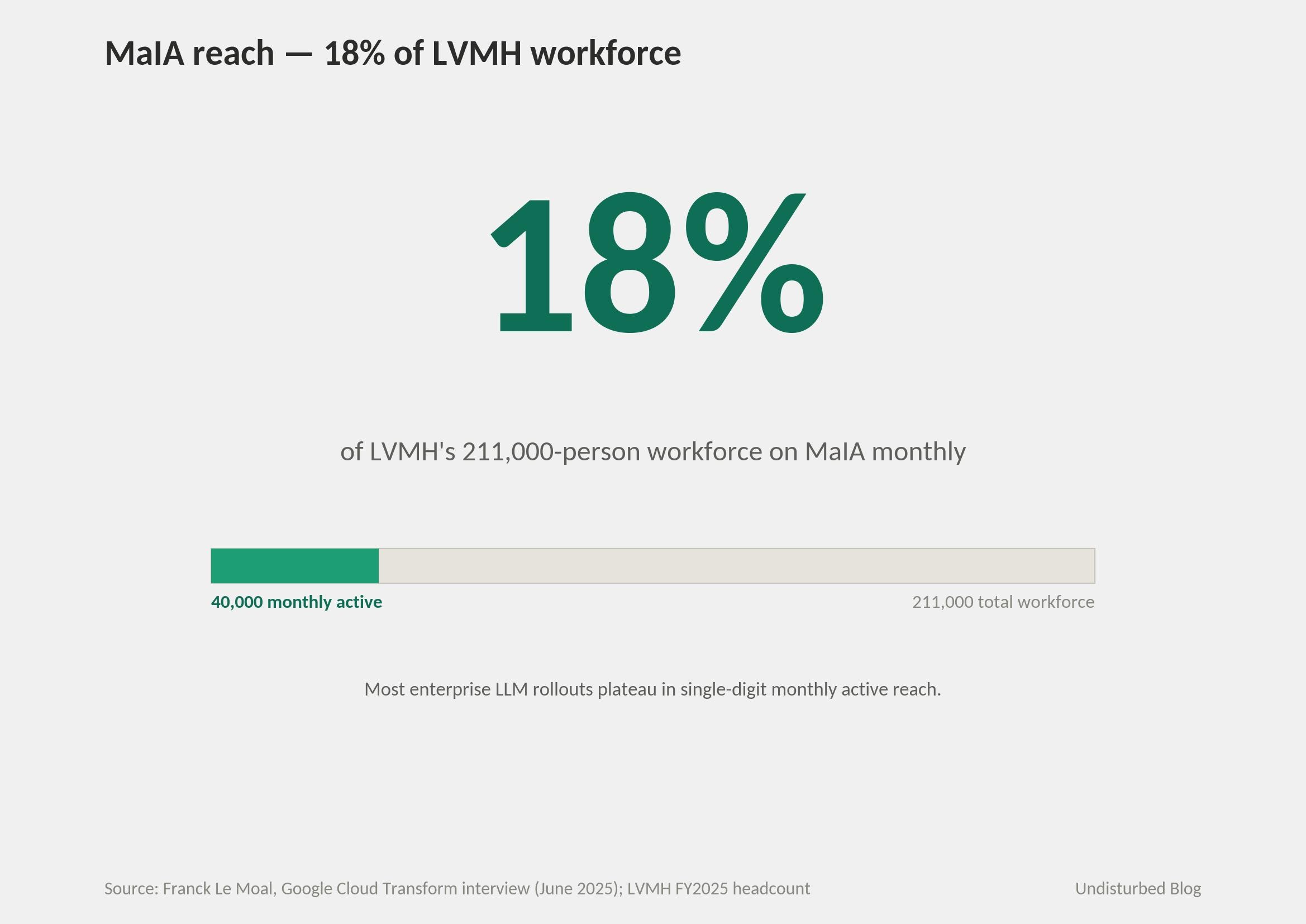

In 2021–22, LVMH built a single Google Cloud data substrate beneath its 75 maisons. The architectural decision that mattered most wasn't centralization. It was the per-maison firewall built into the substrate before any AI workload landed on top. From 2024 the workloads arrived — most visibly MaIA, the group's generative-AI agent, now reaching roughly 40,000 monthly users, about 18% of LVMH's 211,000-person workforce. Through FY2025's reported revenue decline of 5% to €80.8 bn, the substrate held its load: operating free cash flow grew 8% to €11.3 bn, Fashion & Leather Goods preserved an operating margin in the mid-thirties, and MaIA adoption became a board-level KPI under the 'AI for All' plan unveiled at NRF 2026.

The deeper move was the sequencing. When an operating model is federated by design, the substrate beneath it has to be centralized at the infrastructure layer and partitioned at the data layer at the same time. Build the partition primitive into the substrate before any AI workload lands, or the operating units that own the data will not let the workloads land at all. LVMH ran the sequence cleanly: cloud first, then the partition, then training, and agents last. That is the case worth examining.

The Architecture: Single Substrate, Federated Data

Strip the marketing language about 'quiet tech' and 'AI amplifies craft,' and a familiar shape emerges underneath LVMH's AI strategy: build a single data substrate beneath the operating model first; let AI workloads land on top of it later. The doctrine itself is not novel — DHL ran a version of it a half-decade earlier, and it has become the canonical sequence for any large-enterprise AI program serious about leverage.

What's distinct at LVMH is the partition primitive. DHL is one operating reality; LVMH is 75. So the substrate that serves them cannot simply unify. It has to centralize the infrastructure (BigQuery on Google Cloud, Dataiku for MLOps, Vertex AI for application agents) while firewalling the data inside it on a per-maison basis. The Google Cloud account of LVMH's data architecture states the design constraint plainly: the data has to be kept separated, differentiated, and secure across maisons. That wording is doing real architectural work, not marketing work. Group CIO Franck Le Moal has described the operating consequence: each maison runs its own customer relationships on its own data slice, and 'is still in charge of the implementation and support of their own general IT ecosystem.' What gets shared across the firewall is finance, HR, and supply-chain benchmarks. Customer data, never.
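The partition rule described here can be made concrete with a minimal sketch. It assumes a simple record-level policy in which every record carries an owning maison and a data domain: finance, HR, and supply-chain benchmarks are readable group-wide, while customer data is readable only by the maison that owns it. All names (`Domain`, `Principal`, `can_read`, `query`) are hypothetical and do not describe LVMH's implementation, which is not public; on BigQuery a rule like this would more likely be enforced with per-maison datasets or row-level access policies than in application code.

```python
from dataclasses import dataclass
from enum import Enum


class Domain(Enum):
    CUSTOMER = "customer"
    FINANCE = "finance"
    HR = "hr"
    SUPPLY_CHAIN_BENCHMARK = "supply_chain_benchmark"


# Domains this piece describes as shared across the maison firewall (hypothetical encoding).
SHARED_DOMAINS = frozenset({Domain.FINANCE, Domain.HR, Domain.SUPPLY_CHAIN_BENCHMARK})


@dataclass(frozen=True)
class Record:
    maison: str      # owning maison
    domain: Domain
    payload: dict


@dataclass(frozen=True)
class Principal:
    maison: str      # maison a user or AI workload acts on behalf of


def can_read(principal: Principal, record: Record) -> bool:
    """Shared domains are readable group-wide; customer data only by its owning maison."""
    if record.domain in SHARED_DOMAINS:
        return True
    return principal.maison == record.maison


def query(principal: Principal, records: list[Record], domain: Domain) -> list[Record]:
    """Every read, an AI agent's included, goes through the same partition check."""
    return [r for r in records if r.domain is domain and can_read(principal, r)]


if __name__ == "__main__":
    store = [
        Record("tiffany", Domain.CUSTOMER, {"client_id": "t-001"}),
        Record("celine", Domain.CUSTOMER, {"client_id": "c-001"}),
        Record("celine", Domain.SUPPLY_CHAIN_BENCHMARK, {"metric": "lead_time"}),
    ]
    tiffany_agent = Principal("tiffany")
    print(len(query(tiffany_agent, store, Domain.CUSTOMER)))                # only Tiffany's own client
    print(len(query(tiffany_agent, store, Domain.SUPPLY_CHAIN_BENCHMARK)))  # shared benchmark is visible
```

The design point the sketch tries to capture is that an AI workload gets no special path: it queries as a `Principal` bound to one maison, so the firewall applies to agents exactly as it applies to people.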

The variant matters because the pattern only works if the partitioning is built in before the AI workloads land. A unified substrate retrofitted with maison-level access controls would have been litigated by maison CEOs into incoherence the day the first cross-maison workload tried to read data. The deeper sequencing claim is not just data before AI, but firewall before AI.

This is also why most multi-brand groups cannot simply copy the architecture. The luxury holding solves a problem that single-brand operators (Hermès) and federated-but-not-substrated holdings (Richemont) do not face: 75 customer views that must remain distinct because brand-customer specificity is the product. Unilever, Estée Lauder, and Inditex face structurally similar partition problems; few have the founder-CEO authority to enforce a firewall against business units that always lobby for cross-pollination. The architecture is structurally simple. The political cost is what most groups underestimate.

The Strategy in One Picture

Le Moal has described the sequencing in one sentence: 'We had built the core of our data platform in 2021, 2022, and that left us sitting at the ready when generative AI happened.' Build first, deploy later — that is the entire thesis, in his own words.

The LVMH–Google Cloud strategic partnership was announced in June 2021. The substrate was built through 2022. MaIA launched in 2024 into a workforce that already included 1,500 trained data experts and had put 15,000 employees through the Data and AI Academy. By June 2025, Le Moal could report 40,000 monthly users. That is a four-year arc, and every step happened in that order.

What sits in each layer: the substrate runs on Google Cloud globally, with an Alibaba Cloud parallel ('LVMH ATOM China') that has been running since 2019 and was expanded in May 2024 for sovereignty reasons. The algorithm layer is the AI Factory, founded in 2020 under Director Axel de Goursac, building luxury-specific models — most notably scarcity-aware forecasting, which de Goursac has flagged as structurally different from standard fashion-retail forecasting. The workload layer is MaIA (internal-only), custom Vertex AI agents (Tiffany client outreach, Celine retail support), Kahoona predictive segmentation at Dior, OMI 3D digital twins at Guerlain, and trend recognition originally seeded by Heuritech (now via Luxurynsight after the November 2024 acquisition).

Notice what's missing: any direct customer-facing AI. LVMH explicitly does not put MaIA in front of customers. The substrate is the strategy; the customer-facing surface is deliberately not. That choice is what 'quiet tech' actually means in operational terms.

How It Actually Works — Three Closed Loops

Loop 1 — Client advisor.

Customer-360 in the data platform → Vertex agent → recommendations and outreach drafts surfaced to a sales associate → in-store interaction → behavior back into the platform. Tiffany has a client outreach agent that helps advisors craft personalized messages; Celine has a retail agent capable of answering complex floor queries; Louis Vuitton is piloting AI-generated thank-you drafts. Soumia Hadjali, LV's Global SVP for client and digital, framed the design crisply at NRF 2026: agentic AI is 'an intelligent layer to superpower our client advisor.'

The loop is deliberately not a self-driving sales agent. The AI surfaces; the human sells. That is the quiet-tech doctrine made operational — and it is also a structural defense, because client advisors are the place where brand-customer specificity actually lives. Replace client advisors with autonomous agents and the partition firewall above stops mattering — because the brand-customer specificity it was built to protect no longer exists.
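The shape of Loop 1 (agent drafts, advisor decides, outcome flows back) can be sketched as a small human-in-the-loop class. This is an illustration under stated assumptions, not LVMH's or Google's API: `ClientAdvisorLoop`, `suggest`, `advisor_review`, and `record_outcome` are hypothetical names, and `suggest` is a fixed template standing in for a call to a Vertex AI agent.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Draft:
    client_id: str
    text: str
    approved: bool = False


@dataclass
class ClientAdvisorLoop:
    """Hypothetical model of Loop 1: the agent drafts, the advisor decides, outcomes flow back."""
    customer_360: dict = field(default_factory=dict)  # client_id -> profile dict
    outbox: list = field(default_factory=list)        # drafts an advisor approved

    def suggest(self, client_id: str) -> Draft:
        # Stand-in for an agent call; a real system would call a model here.
        name = self.customer_360[client_id]["name"]
        return Draft(client_id, f"Dear {name}, a piece we think you will love has arrived.")

    def advisor_review(self, draft: Draft, approve: bool, edited_text: Optional[str] = None) -> None:
        # Nothing reaches the client without an explicit human decision.
        if approve:
            draft.text = edited_text or draft.text
            draft.approved = True
            self.outbox.append(draft)

    def record_outcome(self, client_id: str, purchased: bool) -> None:
        # Behaviour from the in-store interaction is written back to the customer view.
        self.customer_360[client_id].setdefault("outcomes", []).append(purchased)


if __name__ == "__main__":
    loop = ClientAdvisorLoop(customer_360={"c1": {"name": "Ms. Martin"}})
    loop.advisor_review(loop.suggest("c1"), approve=False)  # advisor declines: nothing is sent
    loop.advisor_review(loop.suggest("c1"), approve=True,
                        edited_text="Dear Ms. Martin, your reserved piece is ready to view.")
    loop.record_outcome("c1", purchased=True)
    print(len(loop.outbox), loop.customer_360["c1"]["outcomes"])
```

The only path into the outbox runs through `advisor_review`, which is the 'AI surfaces; the human sells' rule expressed as control flow.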

Loop 2 — Demand forecasting and stock allocation.

Historical sales + market signals + scarcity constraints → luxury-specific forecasting algorithm → production scheduling → distribution allocation across stores. De Goursac has been explicit at industry conferences that the forecasting models had to be built specifically for luxury, because the constraint isn't 'don't run out'; it's 'manage scarcity as a feature.' Le Moal puts the scope plainly: 'forecasting, budget planning, sales planning, distribution planning, merchandising planning, and even production planning, are all units augmented by applications that use algorithms.'
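The difference between 'don't run out' and 'manage scarcity as a feature' can be illustrated with a deliberately simple allocation rule. This is not LVMH's forecasting algorithm, which is not public. It assumes that scarcity management means capping total supply at a chosen fraction of forecast demand (the hypothetical `scarcity_ratio`) and then splitting that capped supply across stores in proportion to forecast demand, using largest-remainder rounding so whole units add up to the cap.

```python
import math


def allocate(forecast_by_store: dict, scarcity_ratio: float) -> dict:
    """Cap total supply below forecast demand, then split it across stores.

    scarcity_ratio < 1 means deliberately producing less than expected demand.
    Largest-remainder rounding keeps the integer allocations summing to the cap.
    """
    if not 0 < scarcity_ratio <= 1:
        raise ValueError("scarcity_ratio must be in (0, 1]")
    total_demand = sum(forecast_by_store.values())
    supply = math.floor(total_demand * scarcity_ratio)
    shares = {s: supply * d / total_demand for s, d in forecast_by_store.items()}
    alloc = {s: math.floor(x) for s, x in shares.items()}
    leftover = supply - sum(alloc.values())
    # Give any remaining units to the stores with the largest fractional remainders.
    by_remainder = sorted(shares, key=lambda s: shares[s] - alloc[s], reverse=True)
    for s in by_remainder[:leftover]:
        alloc[s] += 1
    return alloc


if __name__ == "__main__":
    forecast = {"paris": 7, "tokyo": 5, "milan": 3}  # hypothetical unit forecasts
    print(allocate(forecast, scarcity_ratio=0.6))    # {'paris': 4, 'tokyo': 3, 'milan': 2}
```

A conventional replenishment rule would push `scarcity_ratio` toward or above 1 to avoid stockouts; the sketch treats a value below 1 as an intentional choice, which is the inversion de Goursac describes.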

This is the loop closest in spirit to UPS's data-as-cage logic — luxury operational exhaust no competitor can replicate, fed back into in-house algorithms. The difference: LVMH does not productize the model. It feeds the maisons.

Loop 3 — MaIA as internal productivity infrastructure.

Group-wide gen-AI agent → ~40,000 monthly users running 1.5–2 million queries per month → privacy-preserving inside the LVMH ringfence → re-trained on internal usage patterns. This is the loop most often understated in coverage. MaIA is not a product. It is the activation surface for the substrate itself — the way most LVMH employees actually touch the data platform, the place where adoption gets measured, the metric that tells whoever is reviewing the AI portfolio whether the substrate is working.

The deeper claim: the third loop closes when the substrate becomes the default tool for the workforce. ~18% workforce reach is what that looks like at month 18 of rollout.

The Numbers

On January 27, 2026, LVMH released FY2025 results: revenue of €80.8 bn (organic −1%, reported −5%), with Q4 organic growth of +1% as the recovery signal. Profit from recurring operations: €17.8 bn, an operating margin of 22%. Group share of net profit: €10.9 bn, down 13% year over year. Fashion & Leather Goods, the dominant division, held its operating margin in the mid-thirties on a 5% organic decline. Operating free cash flow: €11.3 bn, up 8% in a year when revenue was down. Net financial debt fell to roughly €6.9 bn, down sharply from the prior year.
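The headline ratios can be checked from the figures in this paragraph alone. The sketch below recomputes the 22% operating margin and back-solves approximate FY2024 baselines from the stated percentage changes. Because those percentages are rounded, the back-solved values are estimates, not reported figures; the ratio of operating free cash flow to recurring operating profit is a derived measure added here for illustration, not one LVMH is quoted as reporting.

```python
def fy2025_check() -> dict:
    """Recompute ratios from the FY2025 figures quoted above (EUR bn).

    Prior-year values are back-solved from rounded percentage changes,
    so they are approximations, not reported numbers.
    """
    revenue, recurring_op_profit = 80.8, 17.8
    revenue_change = -0.05
    ofcf, ofcf_growth = 11.3, 0.08
    net_profit, net_profit_change = 10.9, -0.13
    return {
        "operating_margin": recurring_op_profit / revenue,
        "implied_fy24_revenue": revenue / (1 + revenue_change),
        "implied_fy24_ofcf": ofcf / (1 + ofcf_growth),
        "implied_fy24_net_profit": net_profit / (1 + net_profit_change),
        "ofcf_to_recurring_op_profit": ofcf / recurring_op_profit,
    }


if __name__ == "__main__":
    for name, value in fy2025_check().items():
        print(f"{name}: {value:.3f}")
```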

That last cluster — margin defense and free-cash-flow growth in a down-revenue year — is the load-bearing financial claim. Substrate-driven productivity is supposed to defend margin and cash exactly when growth slows. LVMH's FY2025 print says the substrate did its job.

On the AI substrate itself, the operative numbers:

MaIA: 40,000 monthly users; 1.5 million queries/month per Le Moal in his June 2025 Google Cloud interview, with WSJ reporting 2 million by mid-year. That works out to roughly 38–50 queries per user per month, or about 2–2.5 per working day. Monthly reach: ~18% of LVMH's 211,000-person workforce.
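The per-user figures follow from simple division. A minimal sketch, assuming about 20 working days per month (the same assumption behind the 2.5-per-day figure used later in this piece); with 21 or 22 working days the daily numbers fall slightly.

```python
def usage_intensity(queries_per_month: float, monthly_users: int,
                    working_days_per_month: int = 20) -> tuple:
    """Return (queries per user per month, queries per user per working day)."""
    per_month = queries_per_month / monthly_users
    return per_month, per_month / working_days_per_month


if __name__ == "__main__":
    for source, queries in [("Le Moal, June 2025", 1_500_000), ("WSJ, mid-2025", 2_000_000)]:
        month, day = usage_intensity(queries, 40_000)
        print(f"{source}: {month:.1f}/user/month, {day:.2f}/user/working day")
```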

Training: 1,500 data experts trained over four years; another 15,000 employees through the Data and AI Academy.

Maisons: 75. Largest by revenue, by industry consensus: Louis Vuitton (~€22–25 bn, ~30% of group revenue, ~43% of operating income).

Footprint: 6,307 stores globally end-2024; >211,000 employees end-2025.

Investment: Aglaé Ventures (Bernard Arnault's family office) deployed into five AI startups in 2024 — H, Lamini, Proxima, Borderless AI, Photoroom — with combined round sizes over $300 million. Adjacent to the operating company, but signaling priorities.

Named program owners: Franck Le Moal (Group IT and Technology Director); Gonzague de Pirey (Chief Omnichannel and Data Officer); Anca Marola (then Group CDO, architect of the Stanford HAI partnership; subsequently named Sephora's Global Chief Digital Officer in February 2024); Axel de Goursac (Director, AI Factory). The org reports up to Group Managing Director Stéphane Bianchi, who explicitly oversees Digital and Data Transformation in addition to several operating divisions. None of these is the CEO. The data and AI organization sits one level below Bernard Arnault and one level above any single maison — exactly the elevation the architecture requires.

Two million queries across 40,000 users is roughly 2.5 queries per user per working day: not transformative, but a well-adopted office utility. The load-bearing claim is the reach: 40,000 monthly active out of ~211,000, or ~18%. Most large-enterprise LLM rollouts plateau at single-digit percentages of the workforce as monthly active users. LVMH's 18% suggests that doctrine ('AI amplifies, never replaces'), mandatory training (15,000 Academy graduates), and the federated maison structure, which runs adoption as the KPI, have actually changed who shows up. Eighteen percent isn't ubiquity. It's 'AI is the white-collar default.' The retail floor is where the next test lives.

Is AI a Norm Inside LVMH?

De Pirey at NRF 2026: 'We invited every maison to have its own AI transformation plan, and when we looked at all these plans, we found a lot of commonality.' That sentence names the structural innovation, and it is worth slowing down on. Centralization runs bottom-up (commonality discovered, not imposed) on top of infrastructure imposed top-down. Most consultancies prescribe the inverse: top-down strategy on bottom-up infrastructure. LVMH flipped it.

The norm test can be answered along several lines. Coverage: Tiffany, Celine, Louis Vuitton, Dior, Sephora, Bvlgari, Givenchy, Hublot, Moët Hennessy, Guerlain, Loro Piana, and Rimowa each have at least one named, deployed AI use case sitting on the shared substrate. Doctrine consistency through succession: from Antonio Belloni's November 2023 Stanford HAI announcement ('AI is a powerful technology... support and complement to human talent') through Stéphane Bianchi's April 2024 ascension as Group Managing Director to Pietro Beccari's January 2026 promotion to CEO of LVMH Fashion Group, the 'AI amplifies craft' line has held essentially unchanged. Adoption is treated as the KPI rather than a vanity metric: every maison's transformation plan ladders up to MaIA reach. Institutional anchors: the annual LVMH Data & AI Summit (700 maison leaders at the 2023 edition, with NVIDIA and Google senior leaders on stage) and Stanford HAI corporate affiliation since November 2023.

The succession test is the hardest one, and it's the one most substrate-led programs fail. Bernard Arnault is 77; shareholders raised the CEO age limit from 75 to 80 in 2022, then again from 80 to 85 in April 2025, allowing him to remain. All five Arnault children hold operating roles — Delphine as CEO of Christian Dior Couture, Antoine as CEO of Christian Dior SE, Alexandre as Deputy CEO of Moët Hennessy, Frédéric as CEO of Loro Piana (since June 2025; Stéphane Bianchi resumed oversight of LVMH Watches), Jean in Louis Vuitton watchmaking. Four of five sit on the LVMH board. But the data and AI organization explicitly reports up to Bianchi, who is not Arnault and not heir. That is the fact that matters. The substrate is institutionalized one level below the founder, in a role that is hireable rather than hereditary.

The deeper design move worth naming explicitly is the direction of imposition. Substrate is non-negotiable, top-down. AI use cases are bottom-up, generated maison-by-maison, then refactored into shared modules ('like Legos,' per de Goursac). Most multi-brand groups try one direction or the other and fail at both: forced central uniformity kills business-unit speed; fragmented BU experiments achieve no leverage. The structure that works — substrate enforced by founder authority, applications generated by maisons with operating autonomy — is what lets both layers function. Replace either side with a weaker form and the model degrades fast.
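The 'commonality discovered, not imposed' mechanism can be sketched as a counting step over maison plans. This is a hypothetical illustration, not LVMH's process: it assumes each maison's plan reduces to a set of use-case labels, and that a use case proposed independently by at least `min_maisons` maisons (an arbitrary threshold of 3 here) becomes a candidate shared module, while the rest stay with the maison that proposed them. The example plans are invented, loosely echoing use cases named earlier in this piece.

```python
from collections import Counter


def find_shared_modules(plans: dict, min_maisons: int = 3) -> tuple:
    """Split maison use cases into shared-module candidates and maison-specific remainders.

    plans maps maison -> set of use-case labels from that maison's own AI plan.
    A use case proposed by at least min_maisons maisons becomes a shared-module
    candidate; everything else stays with the maison that proposed it.
    """
    counts = Counter(uc for use_cases in plans.values() for uc in use_cases)
    shared = {uc for uc, n in counts.items() if n >= min_maisons}
    local = {maison: use_cases - shared for maison, use_cases in plans.items()}
    return shared, local


if __name__ == "__main__":
    plans = {  # hypothetical plans, for illustration only
        "tiffany": {"client_outreach", "demand_forecast", "product_copy"},
        "celine": {"retail_qa", "demand_forecast", "product_copy"},
        "dior": {"segmentation", "demand_forecast", "product_copy"},
        "guerlain": {"3d_twin", "demand_forecast"},
    }
    shared, local = find_shared_modules(plans)
    print(sorted(shared))     # ['demand_forecast', 'product_copy']
    print(local["guerlain"])  # {'3d_twin'}
```

The threshold is the policy lever: raise it and fewer modules are shared, leaving more room for maison-specific work.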

Why This Answer Fits LVMH

LVMH was founded in 1987 from the Louis Vuitton + Moët Hennessy merger; Bernard Arnault took control in 1989. Decentralization has been an Arnault doctrine for nearly four decades — maisons operate autonomously, share capital allocation through the holding, and answer to a founder-CEO whose family controls roughly 66% of voting rights via Christian Dior SE/Agache (per the most recent AMF disclosure). Structurally, this is the most decentralized luxury conglomerate in operation.

That structure creates a problem in the AI era. Each maison alone is too small to build a state-of-the-art data platform. But each maison's customer data is precious enough that pooling it without a firewall would erode the brand-customer specificity that is the product. Every multi-brand luxury group faces some version of the same problem; only LVMH has the founder authority to solve it through the partition substrate.

By contrast: Hermès is single-brand, family-controlled, vertically integrated and artisanal. There is no maison-to-maison aggregation problem to solve, and no substrate to build. Hermès' AI strategy is governed innovation: central guardrails, no shared data platform. Kering is structurally closer to LVMH but historically less group-cohesive; AI activity has been brand-led from Gucci, not platform-led from the holding. Richemont has invested in forecasting (Cartier's documented stock-management gains) but operates closer to a federation of independent maisons than a substrate-organized group.

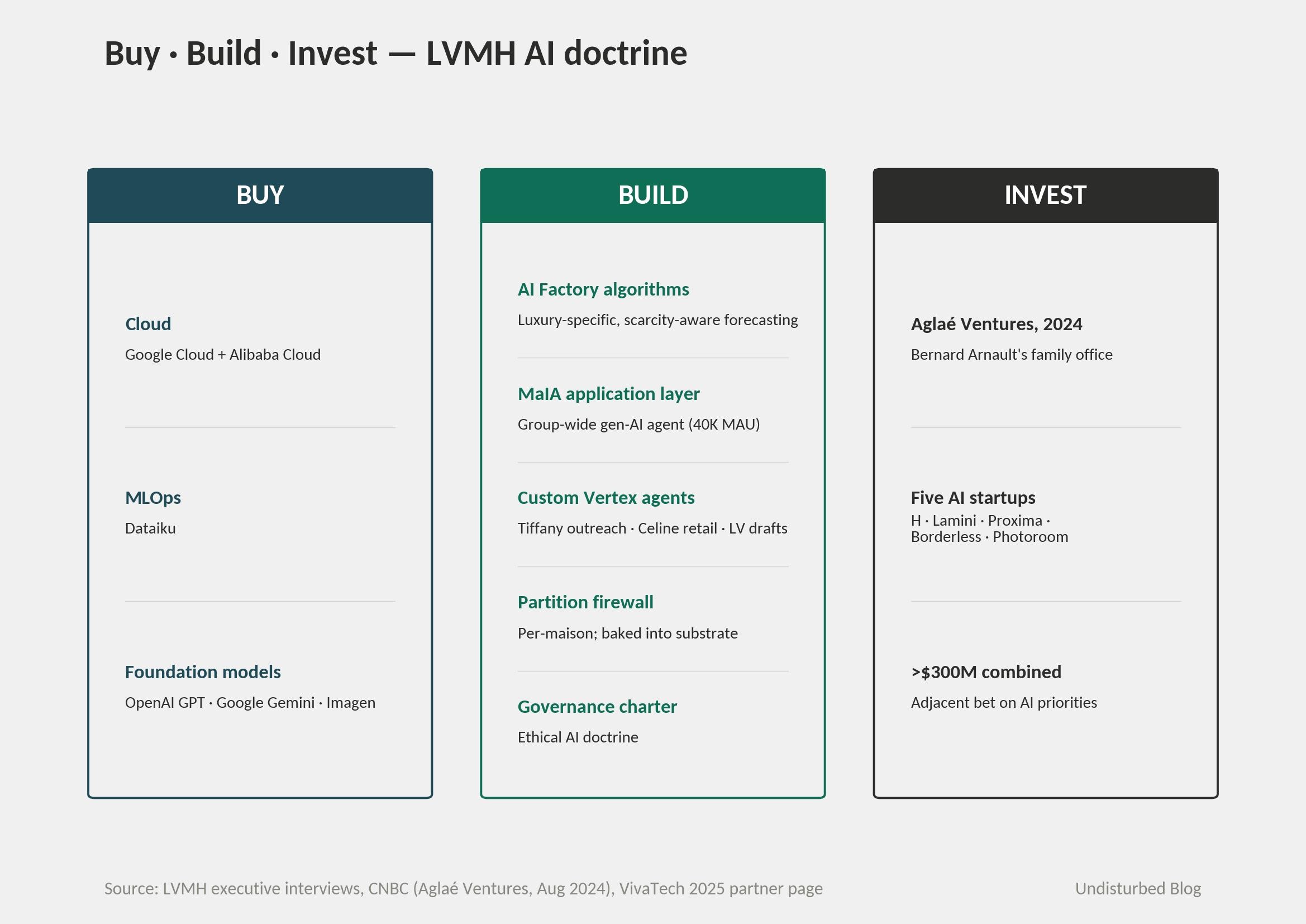

Make-or-buy decisions follow the doctrine cleanly. Buy: the cloud (Google Cloud + Alibaba), MLOps (Dataiku), foundation models (OpenAI GPT alongside Google Gemini and Imagen). Build: the AI Factory algorithms, the MaIA application layer, custom Vertex agents, the partition firewall, the governance charter. Invest: through Aglaé Ventures into five AI startups in 2024 alone. The split is unusually disciplined. LVMH does not try to build infrastructure; it does not try to buy applications.

Why now: post-pandemic luxury normalization (the 2020–23 price-led growth running into consumer resistance), softer Chinese demand, US tariffs reshaping the cross-Atlantic margin equation, agentic-commerce intermediaries threatening to insert themselves between brand and customer. Le Moal flagged the last directly to WWD: 'We don't plan to put chatbots on all our websites… These are luxury sites, after all, and we prefer human interaction.' This is exactly the moment when an operational substrate that lets every maison forecast better, allocate better, and clientele better has its highest marginal value.

The substrate-first sequencing is transferable to any multi-brand holding (Unilever, P&G, Estée Lauder, Inditex, Marriott). What does not transfer is the activation energy: a single founder-CEO with roughly two-thirds of voting rights, a 40-year time horizon, and five heirs in operating roles. Most multi-brand groups have neither the patience to spend three years building substrate before AI workloads land, nor the centralized authority to firewall data between business units that always lobby for cross-pollination. The architecture is data-first sequencing; the precondition is founder-CEO authority over a federated operating model. The two should not be conflated. The structure is simple. The political cost is what makes it rare.

Where It Goes Next

De Pirey announced an 'AI for All' next-phase plan at NRF 2026, extending AI literacy beyond the white-collar 18%, with adoption as the KPI. Hadjali frames agentic commerce as something Louis Vuitton wants to orchestrate across its own ecosystem (stores, app, cafés, exhibitions) rather than expose to third-party agents. Le Moal is more openly defensive about the consumer-facing surface, restating the doctrine bluntly: LVMH does not plan to put chatbots across its websites, preferring human interaction.

Three things to watch through 2026 and into 2027:

Whether MaIA reach moves. Eighteen percent is 'white-collar default.' Pushing to 40–50% requires reaching the retail floor, where the doctrine ('AI amplifies the human') is hardest to operationalize without flattening client-advisor judgment. Hadjali's NRF framing — agentic AI as 'an intelligent layer to superpower our client advisor' — is a careful design choice; the question is whether it ships.

Whether the firewall holds. Any reported incident of cross-maison data leakage would be the first proof point of substrate weakness. The 2025 Louis Vuitton breach (419,000 Hong Kong customers exposed, with further disclosures in several other countries through July) tested the security perimeter but not the partition primitive. That second test has not yet come.

Whether sovereignty exposure forces a posture change. Le Moal: 'we're also increasingly vigilant about a form of American sovereignty.' LVMH has hedged with Alibaba Cloud in China; the European hyperscaler question (Thales/Google JV, sovereign-cloud options) remains open. Single-hyperscaler dependency is the substrate-level vulnerability, and LVMH is among the few large enterprises in this architectural class that have named this risk publicly. If a European sovereign-cloud option matures within that window, LVMH is a plausible first anchor tenant.

The DFS Greater China divestment to China Tourism Group (announced January 2026) narrows operating scope, which should simplify the substrate going forward. The harder question is whether the next leg of value comes from extending substrate breadth (more maisons, more data sources, more sovereignty hedges) or substrate depth (better models, more loops closed). LVMH's stated priorities suggest both. The numbers will say which actually paid.

The Diagnostic Frame

The pattern is structurally simple but rarely succeeds in the wild. Four conditions tend to separate the multi-brand groups that pull this off from the ones that stall.

Substrate before workloads, not in parallel. Groups whose business units sit on a single shared data substrate before AI workloads land tend to scale; groups that try to retrofit access controls onto an already-deployed AI program tend to die in litigation between BUs. The order of operations is non-reversible.

Reach as the load-bearing number. The diagnostic that travels is not productivity gains from a vendor case study — it is reach, measured as percentage of workforce as monthly active users, run as a board-level KPI. Single-digit reach signals that the doctrine has not become operational. Eighteen percent and rising signals that adoption has crossed into the default-tool zone.

Bottom-up commonality on top of top-down infrastructure. Groups whose AI use cases originate at the business-unit level and are then refactored into shared modules tend to scale; groups that impose central AI directives downward tend to stall at the first political fight with a strong-willed BU head. The substrate is non-negotiable. The applications are not.

Slowing growth as the activation moment. Substrate-first sequencing is most valuable when revenue growth is slowing — exactly when the temptation is to underinvest. If the substrate work is being deferred to a better year, the better year will not arrive.
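The four conditions can be read as a checklist. The sketch below encodes them as a hypothetical `GroupProfile` and a `diagnose` function that returns the conditions a group fails. The reach cut-offs are assumptions built from this piece's two reference points (single-digit reach as the warning sign, roughly 18% as the default-tool zone); the band between them is left explicitly unclassified rather than guessed.

```python
from dataclasses import dataclass


@dataclass
class GroupProfile:
    substrate_before_workloads: bool     # condition 1
    monthly_active_reach: float          # condition 2: share of workforce, 0..1
    reach_is_board_kpi: bool             # condition 2
    use_cases_from_business_units: bool  # condition 3
    substrate_funded_in_slowdown: bool   # condition 4


def reach_zone(reach: float) -> str:
    # Hypothetical cut-offs from the two reference points in the text.
    if reach < 0.10:
        return "single-digit"
    if reach >= 0.18:
        return "default-tool"
    return "in between"


def diagnose(p: GroupProfile) -> list:
    """Return the conditions a group currently fails; an empty list means none."""
    gaps = []
    if not p.substrate_before_workloads:
        gaps.append("workloads landed before the substrate")
    if not p.reach_is_board_kpi:
        gaps.append("reach is not a board-level KPI")
    if reach_zone(p.monthly_active_reach) != "default-tool":
        gaps.append("reach below the default-tool zone")
    if not p.use_cases_from_business_units:
        gaps.append("AI use cases imposed top-down")
    if not p.substrate_funded_in_slowdown:
        gaps.append("substrate work deferred to a better year")
    return gaps


if __name__ == "__main__":
    lvmh_like = GroupProfile(True, 0.18, True, True, True)    # profile as described in this piece
    retrofit = GroupProfile(False, 0.06, False, False, True)  # hypothetical stalled group
    print(diagnose(lvmh_like))  # []
    print(diagnose(retrofit))
```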

References